Losing sleep over Generative-AI apps? You're not alone, and you're not wrong. According to the Astrix Security Research Group, mid-size organizations already have, on average, 54 Generative-AI integrations with core systems like Slack, GitHub and Google Workspace, and this number is only expected to grow. Read on to understand the potential risks and how to minimize them.

"Hey ChatGPT, review and optimize our source code"

"Hey Jasper.ai, generate a summary email of all our net new customers from this quarter"

"Hey Otter.ai, summarize our Zoom board meeting"

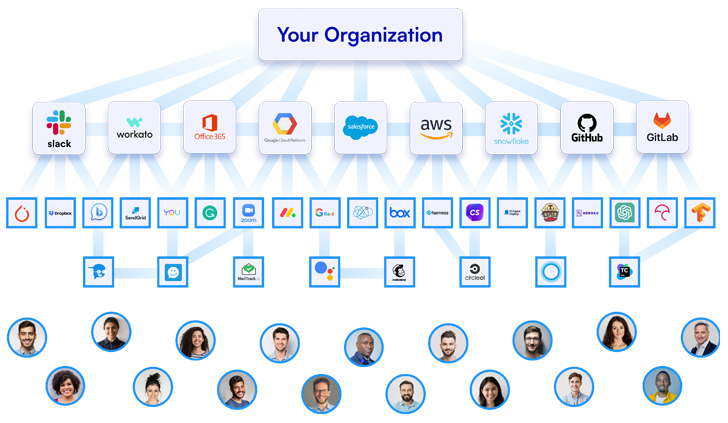

In this era of financial turmoil, businesses and employees alike are constantly looking for tools that automate work processes and increase efficiency and productivity, often by connecting third-party apps to core business systems such as Google Workspace, Slack and GitHub via API keys, OAuth tokens, service accounts and more. The rise of Generative-AI apps and GPT services accelerates this trend, with employees in every department rapidly adding the latest AI apps to their productivity arsenal without the security team's knowledge.

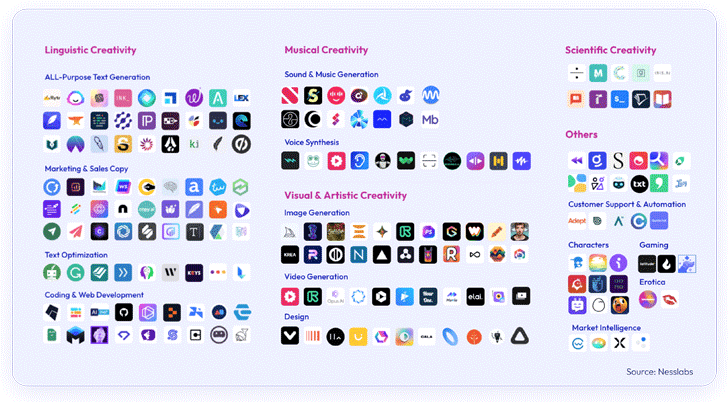

These apps range from engineering tools for code review and optimization to marketing, design and sales tools for content and video creation, image creation and email automation. With ChatGPT becoming the fastest-growing app in history, and AI-powered apps being downloaded 1506% more than last year, the security risks of using these often unvetted apps, and worse, connecting them to core business systems, are already causing sleepless nights for security leaders.

Your organization's app-to-app connectivity

The risks of Gen-AI apps

AI-based apps present two main concerns for security leaders:

1. Data sharing via apps like ChatGPT: The power of AI lies in data, but this very strength can become a weakness if mismanaged. Employees may unintentionally share sensitive, business-critical information, including customer PII and intellectual property such as source code. Such leaks can expose organizations to data breaches, competitive disadvantage and compliance violations. And this is not hypothetical: just ask Samsung.

The Samsung and ChatGPT leaks: a case for caution

Samsung reported three separate leaks of highly sensitive information by three employees who used ChatGPT for productivity purposes. One employee shared confidential source code to check it for errors, another shared code to have it optimized, and the third shared a recording of a meeting to convert it into notes for a presentation. All of this information is now in ChatGPT's hands, where it may be used to train the AI models and could potentially be exposed elsewhere.

2. Unverified Generative-AI apps: Not all Generative-AI apps come from verified sources. Astrix's recent research reveals that employees are increasingly connecting these AI-based apps, which usually have high-privilege access, to core systems like GitHub and Salesforce, raising significant security concerns.

The vast array of Generative AI apps
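One common mitigation for the data-sharing risk described in the first point is to screen text before it is pasted into a Gen-AI app. The Python sketch below is a minimal illustration, assuming a handful of hypothetical regular-expression rules (`SENSITIVE_PATTERNS`) and helper names (`find_sensitive_data`, `safe_to_share`) made up for this example. It is not a feature of ChatGPT or of any specific DLP product, and real detection needs far broader coverage.

```python
import re

# HYPOTHETICAL rules for illustration only. Real DLP tooling covers many
# more data types and validates matches to cut down on false positives.
SENSITIVE_PATTERNS = {
    # Anything shaped like an email address (customer PII).
    "email_address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # Strings shaped like an AWS access key ID (a leaked credential).
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # The header line of a PEM-encoded private key.
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    # US Social Security number format (ddd-dd-dddd).
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def find_sensitive_data(text: str) -> dict[str, list[str]]:
    """Return {rule name: matched substrings} for every rule that fires."""
    findings: dict[str, list[str]] = {}
    for name, pattern in SENSITIVE_PATTERNS.items():
        # The patterns have no capture groups, so findall returns whole matches.
        matches = pattern.findall(text)
        if matches:
            findings[name] = matches
    return findings


def safe_to_share(text: str) -> bool:
    """True only when none of the hypothetical rules matched."""
    return not find_sensitive_data(text)
```

For example, `find_sensitive_data("aws_key = 'AKIAABCDEFGHIJKLMNOP'  # ask jane@example.com")` flags both the access-key-shaped string and the email address, so `safe_to_share` returns `False` for that prompt. Pattern matching like this only catches well-structured secrets; it would not have recognized proprietary source code like the code in the Samsung case.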

Real-life example of a risky Gen-AI integration:

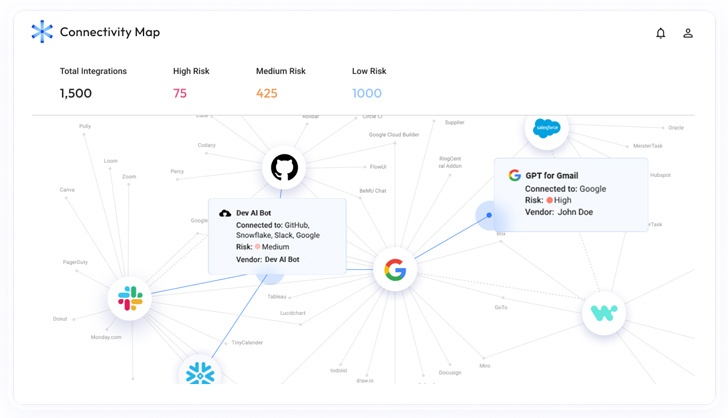

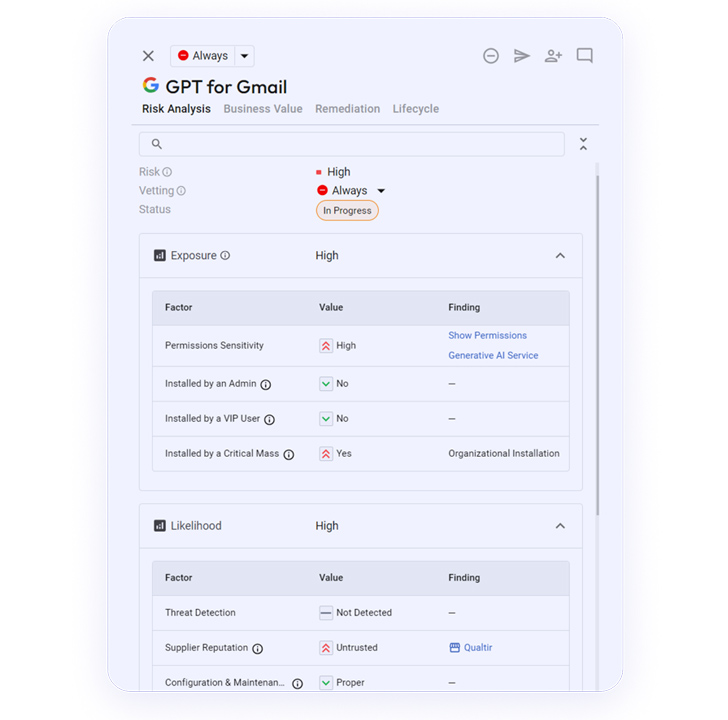

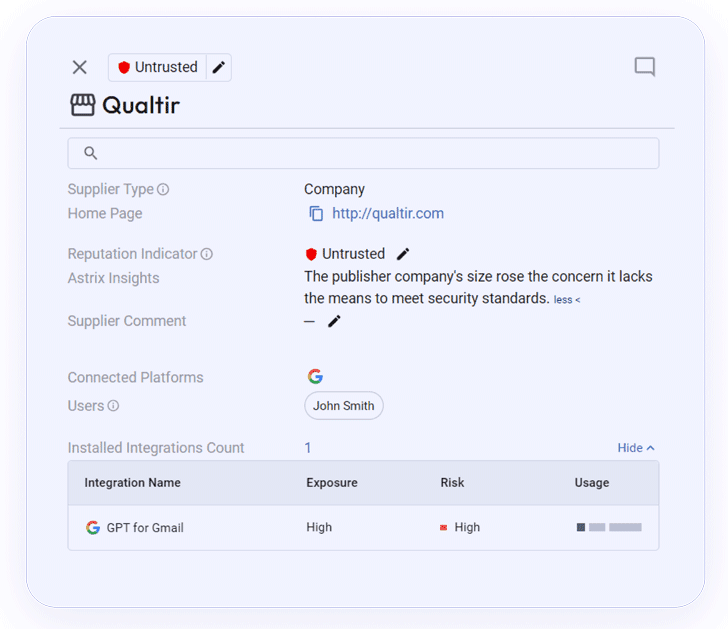

The images in this section show details from the Astrix platform about a risky Gen-AI integration that connects to the organization's Google Workspace environment.

This integration, the Google Workspace integration "GPT For Gmail", was developed by an untrusted developer and granted high-level permissions to the organization's Gmail accounts:

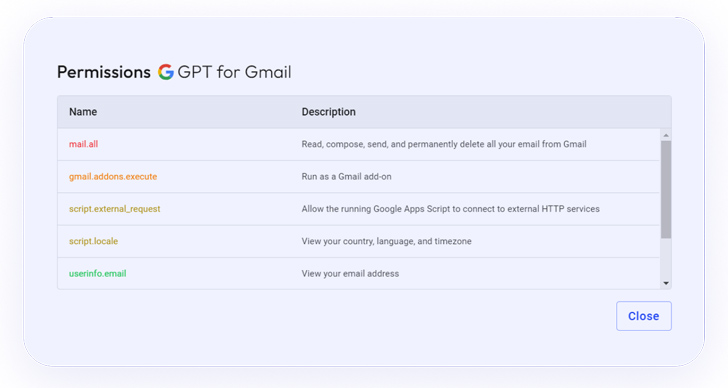

Among the permission scopes granted to the integration is "mail.all", which allows the third-party app to read, compose, send and delete emails, a very sensitive privilege.

The integration's supplier is also flagged as untrusted.
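Permission scopes like "mail.all" can be triaged automatically. The capability described for it (read, compose, send and delete) appears to correspond to Google's full-access Gmail scope, `https://mail.google.com/`. The sketch below assigns illustrative risk tiers to a few real Google OAuth scopes; the `SCOPE_RISK` table, the tier each scope receives and the rule that unrecognized scopes default to "high" are assumptions made for this example, not an official Google or Astrix rating.

```python
# Illustrative risk tiers for a few real Google OAuth scopes.
# ASSUMPTION: the tier assigned to each scope is our own judgment,
# not an official Google or Astrix rating.
SCOPE_RISK: dict[str, str] = {
    # Full Gmail access: read, compose, send and permanently delete mail.
    "https://mail.google.com/": "critical",
    # Read/write access to mail, without immediate permanent deletion.
    "https://www.googleapis.com/auth/gmail.modify": "high",
    # Send mail on the user's behalf.
    "https://www.googleapis.com/auth/gmail.send": "high",
    # Read-only access to messages and settings.
    "https://www.googleapis.com/auth/gmail.readonly": "medium",
    # The user's primary email address only.
    "https://www.googleapis.com/auth/userinfo.email": "low",
}

# Numeric ordering so that tiers can be compared.
RISK_LEVELS: dict[str, int] = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def classify_scopes(granted_scopes: list[str]) -> dict[str, str]:
    """Map each granted scope to a risk tier.

    ASSUMPTION: scopes missing from SCOPE_RISK default to "high" so that
    they are routed to a human reviewer instead of silently passing.
    """
    return {scope: SCOPE_RISK.get(scope, "high") for scope in granted_scopes}


def overall_risk(granted_scopes: list[str]) -> str:
    """Return the highest tier among the granted scopes ("low" if none)."""
    tiers = classify_scopes(granted_scopes).values()
    return max(tiers, key=RISK_LEVELS.__getitem__, default="low")
```

An app holding both `userinfo.email` and `https://mail.google.com/` would be rated "critical", because the overall rating is driven by the single most powerful scope rather than by an average.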

How Astrix helps minimize your AI risks

To safely navigate the exciting but complex landscape of AI, security teams need robust non-human identity management: visibility into the third-party services employees are connecting, control over the permissions those services hold, and a way to properly evaluate the potential security risks. With Astrix, you can now:

The Astrix Connectivity map
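At its simplest, the visibility and permission control described above means keeping an inventory of connected apps and triaging it. The sketch below is a minimal, hypothetical illustration of that idea, not a description of how Astrix works: the `Integration` record, the `HIGH_PRIVILEGE_SCOPES` set and the three review buckets are assumptions made for this example. It flags apps that combine high-privilege access with an unverified vendor, like the "GPT For Gmail" integration above.

```python
from dataclasses import dataclass, field


@dataclass
class Integration:
    """HYPOTHETICAL inventory record for one third-party app connected to a core system."""
    name: str
    platform: str          # e.g. "Google Workspace", "Slack", "GitHub"
    verified_vendor: bool  # did the publisher pass your own vendor review?
    scopes: list[str] = field(default_factory=list)


# ASSUMPTION: an illustrative, far-from-complete set of high-privilege scopes.
HIGH_PRIVILEGE_SCOPES = {
    "https://mail.google.com/",               # Google: full Gmail access
    "https://www.googleapis.com/auth/drive",  # Google: full Drive access
    "repo",                                   # GitHub: full control of private repos
}


def triage(integrations: list[Integration]) -> dict[str, list[str]]:
    """Sort integrations into review buckets by vendor trust and privilege level."""
    buckets: dict[str, list[str]] = {"revoke_or_review_now": [], "review": [], "ok": []}
    for app in integrations:
        privileged = any(scope in HIGH_PRIVILEGE_SCOPES for scope in app.scopes)
        if privileged and not app.verified_vendor:
            # Unverified vendor holding broad access: the riskiest combination.
            buckets["revoke_or_review_now"].append(app.name)
        elif privileged or not app.verified_vendor:
            # Exactly one risk factor present: worth a human look.
            buckets["review"].append(app.name)
        else:
            buckets["ok"].append(app.name)
    return buckets
```

With three records, "GPT For Gmail" (unverified, `https://mail.google.com/`), a hypothetical verified "Meeting notes bot" on Slack with `channels:history`, and a hypothetical verified "Code reviewer" on GitHub with `repo`, `triage` puts the first in `revoke_or_review_now`, the code reviewer in `review` and the notes bot in `ok`. Using two independent signals keeps a trusted vendor with broad access from passing unreviewed.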

